How To Manage URLs On A Multi-Country And Multi-Language Website

July 29th, 2016

Defining a URL structure for an international website is a critical decision for every online business that operates on a global scale and serves localized content in different countries. The chosen setup has several consequences: from a technical perspective, it can constrain (or not) the server architecture; from a user perspective, it can be more or less easy to understand; from an SEO perspective, it can be more or less effective at driving qualified organic traffic to your website.

It’s interesting to note that there is no universal strategy, and even the biggest online brands differ in how they address this challenge. Below is a summary of the pros and cons of the different strategies, with a focus on the factors that influence this choice.

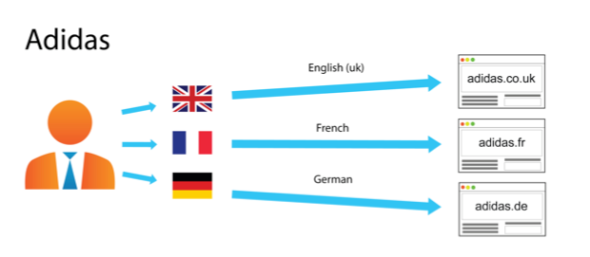

Country-Specific URL Structure Using ccTLDs

Technical perspective. This is the most expensive strategy because it requires buying and maintaining a local domain for every country involved. It can also be difficult to obtain your brand’s domain name everywhere: it may already be taken, in which case time and cost can grow dramatically. On the other hand, it leaves you free to use different server locations, which can be very important for a big global business requiring the highest possible performance in every region.

User perspective. This is very easy for the general Internet user to understand. A local ccTLD generally gives the impression of a closer, faster and better service. On the other hand, a global brand may prefer to be known everywhere with a “.com” domain. Also, the “.com” domain is usually the main entrance for direct traffic, so you must own it and be sure to properly redirect users from it to the right local domain.

SEO perspective. Since ccTLDs are the primary element that Google uses to determine a website’s targeted country, this is the easiest and most direct technique for managing optimized international URLs.

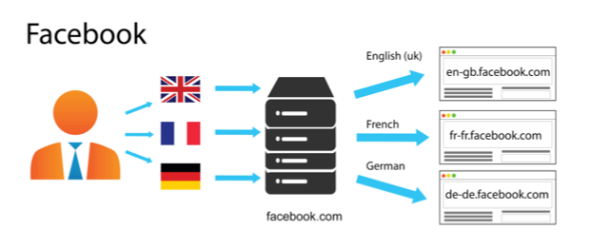

A gTLD With Country-Specific Subdomains

Technical perspective. This technique is easy to set up. It requires some effort to maintain the different hosts, but it is not expensive and, like the previous one, it allows a decentralized architecture with different server locations.

User perspective. Definitely not the clearest or most immediate choice for your users. The well-known “www.yourname.com” structure is not preserved intact.

SEO perspective. Since it does not use separate ccTLDs, other actions are required to let Google know the correct audience of your pages (e.g., Search Console geotargeting and hreflang annotations).
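As a rough illustration of the hreflang part, here is a minimal Python sketch that generates the `<link rel="alternate">` tags for one page. The `example.com` subdomains, the `/products/` path and the `ALTERNATES` mapping are placeholders invented for this example, not a real setup. Every localized version of the page is expected to carry the full set of tags, including the one pointing to itself.

```python
from html import escape

# Hypothetical mapping: hreflang value -> URL of the same page for that audience.
# "x-default" marks the fallback version for users who match none of the others.
ALTERNATES = {
    "en-us": "https://us.example.com/products/",
    "en-gb": "https://uk.example.com/products/",
    "it-it": "https://it.example.com/products/",
    "x-default": "https://www.example.com/products/",
}


def hreflang_links(alternates):
    """Return one <link rel="alternate"> tag per localized version of a page."""
    return [
        '<link rel="alternate" hreflang="{}" href="{}" />'.format(
            escape(code, quote=True), escape(url, quote=True)
        )
        for code, url in alternates.items()
    ]


if __name__ == "__main__":
    # These tags belong in the <head> of every version listed above.
    for tag in hreflang_links(ALTERNATES):
        print(tag)
```

The same function works unchanged for the subdirectory structure: only the URLs in the mapping differ.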

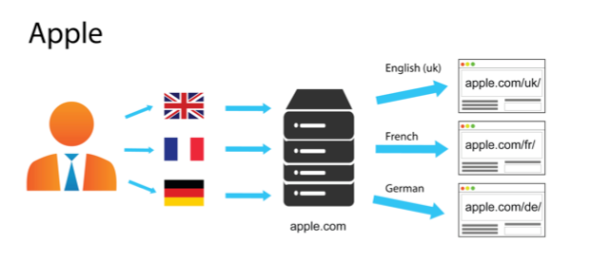

A gTLD With Country-Specific Subdirectories

Technical perspective. Very easy to set up, this technique also requires little maintenance, since the same host serves the whole network. On the other hand, it is not the right solution if you need to manage separate server locations (or if you are planning to do it in the future).

User perspective. It is quite clear for the user and it’s probably the best choice for a global brand that wants to be known and visible everywhere with a clear “www.yourname.com” domain.

SEO perspective. The same considerations apply as for the previous technique.

Querystring Parameters For Country Localization

This technique is not recommended. From a user perspective, the localization is not easily recognizable. But the most important cons are related to SEO: query string parameters are often ignored by search engines and, at the moment, if your URLs are built this way, it’s not possible to use Google Search Console for geotargeting. Why, then, is Twitter using this technique? I imagine they decided on it when SEO was not so important for them.
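To compare the four structures side by side, the following Python sketch builds the URL of the same page under each of them. The function name, the `country` parameter name in the query string and the brand `yourname` are assumptions made for illustration. It also assumes that the two-letter country code coincides with the ccTLD, which is not always true (the United Kingdom uses `.uk`, while its ISO code is `GB`).

```python
from urllib.parse import urlencode


def localized_urls(brand, country, path="/"):
    """Build the URL of one page under each of the four strategies."""
    country = country.lower()
    # "country" as a query string key is a hypothetical name for this sketch.
    query = urlencode({"country": country})
    return {
        "cctld": f"https://www.{brand}.{country}{path}",
        "subdomain": f"https://{country}.{brand}.com{path}",
        "subdirectory": f"https://www.{brand}.com/{country}{path}",
        "querystring": f"https://www.{brand}.com{path}?{query}",
    }


if __name__ == "__main__":
    for strategy, url in localized_urls("yourname", "it", "/shop/").items():
        print(f"{strategy:13} {url}")
```

Only the first three forms expose the country in the host or the path; in the last one the localization is visible only in the query string.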

What does Google use for geotargeting a page?

Google generally uses the following signals to define a website’s targeted country and language, in order of priority:

- Country-code top-level domain names (ccTLDs). Websites with a local ccTLD are generally treated as local content by Google. But pay attention to the so-called “vanity ccTLDs” (such as .tv, .me, etc.): they are treated as generic and do not have the same local value in terms of SEO. See a full list of domains Google treats as generic. Note that regional top-level domains such as .eu or .asia are also treated by Google as generic top-level domains.

- Geotargeting settings. If your site has a generic top-level domain name you can use the geotargeting settings in Google Search Console to indicate to Google the targeted country of your website.

- Server location (through the IP address of the server). The server location can be used as a signal about your site’s targeted audience. However, Google is aware that many websites use distributed CDNs or are hosted in countries offering a better infrastructure, so it is not a definitive signal.

- Other signals. Pay attention to the local addresses and phone numbers on the pages, to the currency (especially for online stores), to links from other local sites: they are all used by Google to determine your website’s country and language localization. Also, if your website has any kind of physical touchpoint, be sure to use Google My Business properly.
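The first signal in the list can be approximated with a small Python check on the hostname. This is only a sketch: the two sets below contain just the examples named above and are far from Google's full list, and the rule "a two-letter TLD is a country code" is a simplification that ignores internationalized ccTLDs. The function name and return values are made up for this example.

```python
# Illustrative subsets only, taken from the examples in the text.
# The real list of ccTLDs that Google treats as generic is longer.
VANITY_CCTLDS = {"tv", "me"}
REGIONAL_TLDS = {"eu", "asia"}


def tld_signal(hostname):
    """Return "country" if the TLD alone suggests a target country, else "generic"."""
    tld = hostname.rstrip(".").rsplit(".", 1)[-1].lower()
    if tld in VANITY_CCTLDS or tld in REGIONAL_TLDS:
        return "generic"
    # Simplification: two-letter ASCII TLDs are country codes.
    if len(tld) == 2 and tld.isascii() and tld.isalpha():
        return "country"
    return "generic"


if __name__ == "__main__":
    for host in ("www.yourname.it", "yourname.tv", "yourname.eu", "www.yourname.com"):
        print(host, "->", tld_signal(host))
```

A "generic" result means the domain itself says nothing about the country, so the other signals (geotargeting settings, server location, on-page details) have to carry that information.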

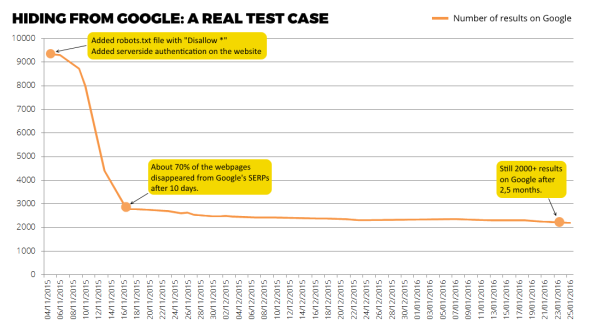

My notes from a real case

In 2015 we chose the third option when planning the final domain migration for KIKO Milano’s e-commerce network, which had reached a global scale with the opening of the US website. Previously, we had a specific ccTLD for every country, but that was unsatisfactory for technical, legal and branding reasons. So we moved everything under a “.com” domain, using subfolders to manage the different countries (and languages). In this case, I recommend the following actions (all of which we took):

- Use an IP detection engine. We placed it on every page, but it’s important at least on your default “country selection” home page, so that you can redirect users to the most appropriate subfolder: the one corresponding to the country they are browsing from (plus the language of their browser).

- Do your homework on Google Search Console. Remember to add every website to Google Search Console (previously known as Google Webmaster Tools) and configure the geotargeting settings for each of them, in order to get more qualified traffic from Google.

- Try to own your brand everywhere. Even though we moved KIKO to a global “.com” domain, we always try to own our local branded domain in every country that is relevant for the business and redirect it to our website. This is a typical brand protection activity, but it is also important for maximizing direct traffic without losing any of the users who look for your website by guessing the address in the URL bar of their browser.
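The redirect described in the first point could look roughly like the Python sketch below. It is not the code we ran for KIKO: the `SUPPORTED` table, the `/country/language/` subfolder layout and the function names are assumptions made for illustration, and the country code is assumed to come from a geo-IP lookup that is not shown here. The browser language is read from the `Accept-Language` request header.

```python
# Hypothetical site map: country subfolder -> languages available in it.
# The first language of each list is the country's default.
SUPPORTED = {
    "it": ["it"],
    "us": ["en"],
    "ch": ["de", "fr", "it"],
}
DEFAULT_PATH = "/"  # the "country selection" home page


def preferred_languages(accept_language):
    """Parse an Accept-Language header into primary language tags, best first."""
    weighted = []
    for part in accept_language.split(","):
        piece = part.strip()
        if not piece:
            continue
        tag, _, params = piece.partition(";")
        quality = 1.0  # a missing q-value means full preference
        params = params.strip()
        if params.startswith("q="):
            try:
                quality = float(params[2:])
            except ValueError:
                quality = 0.0
        weighted.append((quality, tag.strip().split("-")[0].lower()))
    # The sort is stable, so equal weights keep the order of the header.
    weighted.sort(key=lambda item: item[0], reverse=True)
    return [lang for _, lang in weighted]


def redirect_path(country_code, accept_language):
    """Pick the subfolder for a visitor, or the selection page if unknown."""
    country = country_code.lower()
    languages = SUPPORTED.get(country)
    if not languages:
        return DEFAULT_PATH
    for lang in preferred_languages(accept_language):
        if lang in languages:
            return f"/{country}/{lang}/"
    return f"/{country}/{languages[0]}/"


if __name__ == "__main__":
    print(redirect_path("CH", "fr-CH, fr;q=0.9, en;q=0.8"))
    print(redirect_path("US", "it-IT,it;q=0.9"))
    print(redirect_path("JP", "ja"))
```

Falling back to the country selection page for unsupported countries keeps the visitor in control, which matters because IP-based detection can be wrong (travellers, VPNs, corporate proxies).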